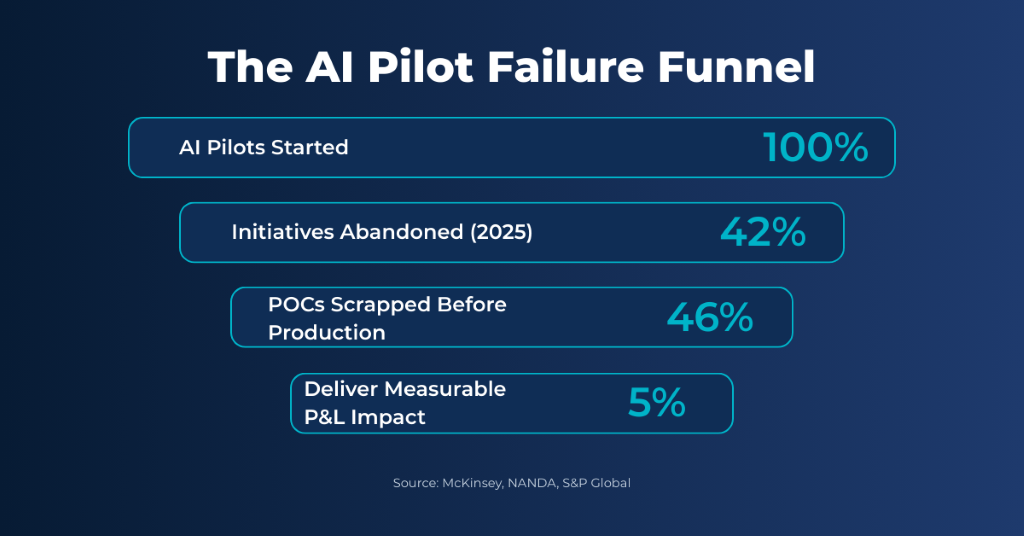

95% of generative AI pilots fail to deliver measurable P&L impact.

That figure comes from MIT’s Project NANDA, based on 150 interviews, 350 employee surveys, and 300 public AI deployments. S&P Global data points the same way:

- The number of companies abandoning most AI initiatives jumped from 17% in 2024 to 42% in 2025.

- The average organization scrapped 46% of AI proof-of-concepts before they ever reached production.

The pattern is consistent across industries.

AI arrives as an experiment: a customer support team testing a language model, a developer generating code, a compliance analyst summarizing regulatory updates.

Each step makes sense. Quick wins accumulate.

Then something shifts.

What started as isolated experiments quietly embeds itself into core business processes: customer service, software development, operations, compliance, decision-making support.

AI stops being a project and starts becoming infrastructure.

And this is precisely where most enterprises are stuck.

The Experimentation Trap

Here’s an uncomfortable truth: experimentation doesn’t scale.

What does that mean?

Enterprises across industries are rapidly adopting LLMs and emerging standards like the Model Context Protocol (MCP) to connect AI to real workflows.

The intent seems clear.

Make AI useful inside systems that actually matter.

But the execution tells a different story.

Most organizations are still using experimental integration approaches for systems that operate directly inside revenue, risk, and regulatory workflows. That mismatch creates friction and exposure at scale.

The result? Enterprise AI looks powerful on the surface. Underneath, it’s messy, risky, difficult to observe, and increasingly expensive.

Average monthly AI spend reached $85,521 in 2025, a 36% increase from $62,964 the previous year. 45% of organizations now spend over $100,000 monthly on AI, up from 20% in 2024. And most can’t answer basic questions: Which team is driving costs? Which workflows deliver value? Where is spend increasing and why?

Five Failure Points Hiding in Plain Sight

On paper, AI adoption looks like progress. In practice, most enterprises are accumulating technical and organizational debt faster than they can realize value.

1. Fragmented Teams, Fragmented Systems

Different teams adopt AI independently. Each selects its own models, tools, vendors, and workflows. What forms isn’t innovation — it’s silos.

Documentation becomes inconsistent. Valuable lessons stay trapped within individual teams. Systems become harder to audit, harder to improve, and nearly impossible to reason about at the enterprise level.

No shared architecture. No shared standards. No shared learning.

2. Governance Models That Don’t Translate

Existing governance frameworks were built for deterministic systems—traditional software, APIs, rules-based logic. AI doesn’t behave that way.

These models are probabilistic. Outputs vary. Context changes results.

Applying old governance rules to new AI systems creates security gaps, compliance blind spots, and manual reviews that can’t scale. Only 18% of organizations have an enterprise-wide AI governance council. Meanwhile, 480+ bills referencing AI have been enacted at the state level in the US alone, and the EU AI Act takes full effect by 2026 with fines up to €35 million or 7% of global revenue.

Governance exists. It just doesn’t work.

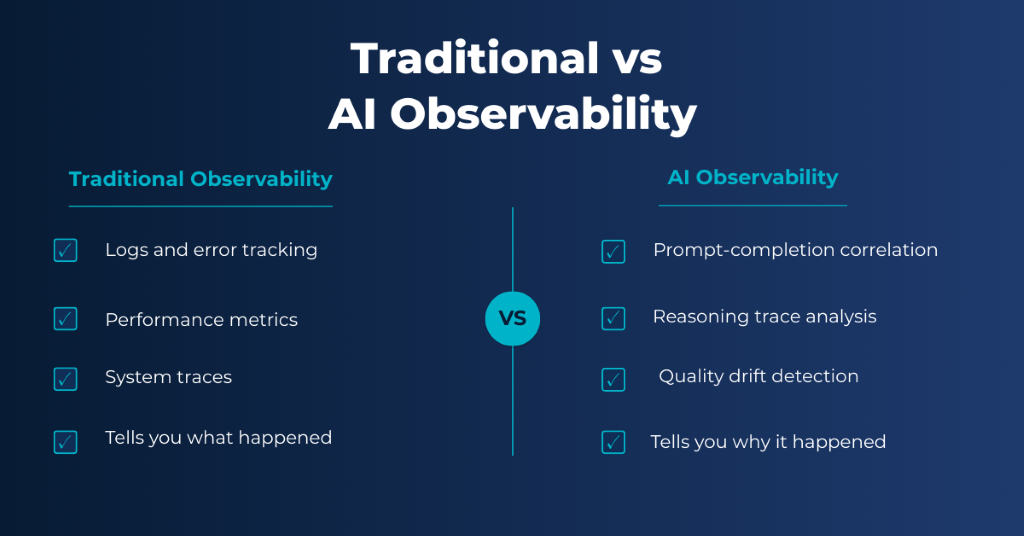

3. Observability That Misses the Point

Traditional observability tools tell you what happened. They don’t tell you why.

With AI systems, logs don’t explain reasoning. Errors don’t follow deterministic patterns. Performance degrades silently over time. Teams struggle to detect quality drift, understand model behavior, or trace failures across multi-step AI workflows.

And by the time anyone notices, it’s usually a customer or a regulator raising the alarm—and at that point, the damage has already been done.
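To make "quality drift" concrete, here is a minimal sketch of one way to catch it early: keep a rolling window of quality scores and flag when the recent average falls below an agreed baseline. The class name, the window size, the tolerance, and the idea that each response gets a score between 0 and 1 are all assumptions for illustration, not a description of any particular product.

```python
from collections import deque
from statistics import mean


class QualityDriftMonitor:
    """Flags drift when the rolling mean of quality scores drops below a baseline.

    Scores are assumed to be numbers in [0, 1] produced by some evaluation
    step (human ratings, automated checks). How they are produced is outside
    the scope of this sketch.
    """

    def __init__(self, baseline: float, window: int = 50, tolerance: float = 0.05):
        self.baseline = baseline
        self.tolerance = tolerance
        self.scores = deque(maxlen=window)  # keeps only the most recent scores

    def record(self, score: float) -> bool:
        """Add a score; return True if the rolling mean has drifted."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data to judge yet
        return mean(self.scores) < self.baseline - self.tolerance


# Hypothetical usage: a baseline of 0.90 with a small window for readability.
monitor = QualityDriftMonitor(baseline=0.90, window=3)
for score in (0.92, 0.91, 0.90, 0.70):
    if monitor.record(score):
        print("quality drift detected")
```

A check like this does not explain why quality dropped, but it can turn a silent decline into an internal alert before a customer or regulator finds it.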

4. Shadow AI Running Wild

80% of workers use unapproved AI tools, including 90% of security professionals. 38% of employees share sensitive data with AI without approval. 77% paste data into GenAI prompts, and 82% of that activity comes from unmanaged accounts.

This isn’t a training problem. Looked at closely, it’s an architecture problem.

When there’s no centralized way to access AI, teams find their own paths, and shadow AI starts to creep in.

AI-associated breaches now cost over $650,000 per incident. Shadow AI leaking EU customer data without consent can trigger GDPR fines up to 4% of global revenue.

5. Costs Spiraling Without Visibility

AI cost structures are fundamentally different from traditional software. Usage-based pricing, multiple vendors, and distributed adoption make it nearly impossible for finance teams to track what’s actually happening.

Strategic optimization can reduce AI expenses by 50-90%. Enterprises report 42% reductions in monthly token costs through storage alone. Prompt optimization can deliver a 40-50% reduction in token usage. Model cascading, which routes simple tasks to cheaper models, can cut costs by 60%.

But none of that matters if you can’t see what you’re spending or where.
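Model cascading, as described above, is simple enough to sketch. The example below routes a prompt to a cheaper or a more expensive model using a crude complexity heuristic. The model names, prices, keyword list, and word limit are all placeholders chosen for illustration. A production router would more likely use a classifier or a confidence signal from the cheaper model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    cost_per_1k_tokens: float  # hypothetical price in USD


# Hypothetical tiers, cheapest first. Names and prices are placeholders.
TIERS = [
    ModelTier("small-model", 0.0005),
    ModelTier("large-model", 0.0100),
]

# Assumed markers of a task that needs the stronger model.
HARD_MARKERS = ("analyze", "compare", "explain why", "step by step")


def is_simple(prompt: str, max_words: int = 40) -> bool:
    """Crude heuristic: short prompts with no reasoning keywords count as simple."""
    text = prompt.lower()
    return len(text.split()) <= max_words and not any(m in text for m in HARD_MARKERS)


def route(prompt: str) -> ModelTier:
    """Send simple prompts to the cheapest tier, everything else to the largest."""
    return TIERS[0] if is_simple(prompt) else TIERS[-1]


def estimated_cost(prompt: str, tokens: int) -> float:
    """Estimated cost in USD for a request of the given token count."""
    return route(prompt).cost_per_1k_tokens * tokens / 1000


print(route("Translate 'hello' to French").name)
print(route("Analyze this contract and compare it with last year's terms").name)
```

The routing rule is the easy part. Knowing how much traffic falls into each tier, and what each tier costs per team, is what makes the savings measurable.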

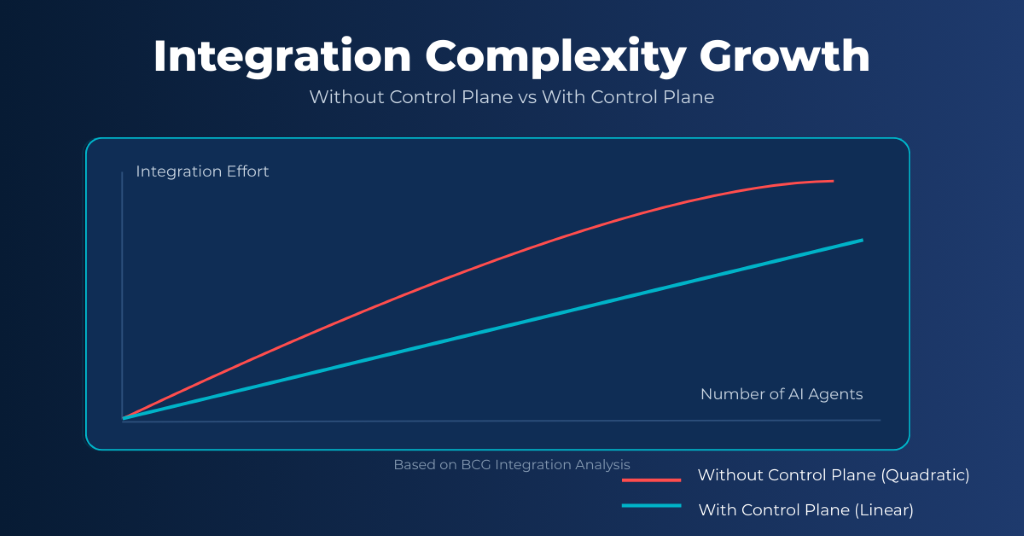

Why “More Tools” Makes It Worse

In response to all this, enterprises do what they know best: buy more tools.

One team deploys a monitoring solution. Another builds a policy engine. A third writes custom orchestration code.

Individually, these solutions make sense. Collectively, they fail.

This approach creates:

- overlapping capabilities,

- inconsistent enforcement,

- high maintenance overhead,

- and fragile integrations that break as systems evolve.

Without a unified approach, integration complexity rises quadratically as AI agents spread. With a control plane, integration effort increases only linearly.
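The quadratic-versus-linear claim is easy to check with a little arithmetic. The sketch below assumes the worst case for the tool-by-tool approach, where each of n components (agents, tools, policy engines) needs a direct integration with every other one. In the control plane case, each component connects once to the shared layer. Both function names are made up for this example.

```python
def point_to_point_links(n: int) -> int:
    """Pairwise integrations if each of n components connects to every other."""
    return n * (n - 1) // 2


def control_plane_links(n: int) -> int:
    """Integrations if each of n components connects once to a shared control plane."""
    return n


for n in (5, 10, 50):
    print(n, point_to_point_links(n), control_plane_links(n))
# prints:
# 5 10 5
# 10 45 10
# 50 1225 50
```

Real estates are rarely fully connected, so the pairwise number is an upper bound, but the shape of the curve is the point: it grows with the square of n rather than with n.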

The MIT NANDA research is clear on this point: purchasing AI from specialized vendors succeeds about 67% of the time. Internal builds succeed only one-third as often.

The real gap isn’t about what AI can do. It’s about how AI is built into the system and how it works at scale.

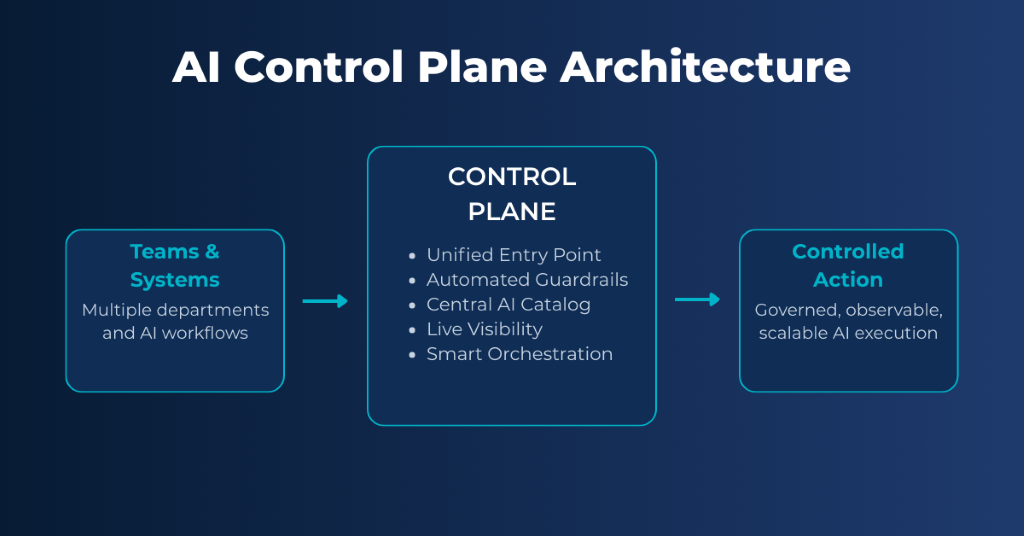

The System (Architectural) Shift: Tools -> Control Plane

Enterprises don’t need another tool. They need one central place to run and govern AI.

A system that standardizes how AI is:

- accessed

- governed

- observed, and

- scaled across teams, models, and vendors.

This is the same architectural evolution enterprises made with cloud infrastructure, APIs, and identity management. AI is simply the next layer.

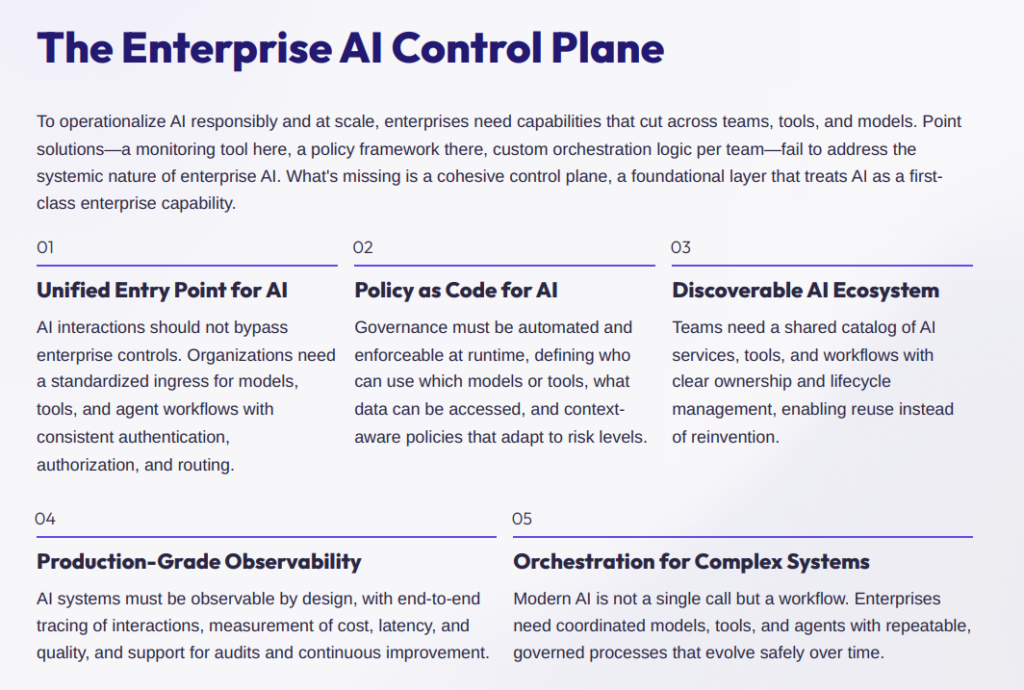

What a Modern AI Control Plane Requires

Five things.

1. Unified Entry Point. All AI traffic flows through a single gateway. Security policies are enforced “by default.” Compliance is consistent. Shadow AI usage is removed completely. No exceptions. No side doors.

2. Automated Guardrails. Policies shouldn’t live in documents. They should live as code. By converting governance rules into automated guardrails, enterprises can enforce access control, risk thresholds, and usage constraints in real time—without slowing teams down.

3. Central AI Catalog. Instead of every team reinventing workflows, enterprises need a shared library of approved models, validated tools, and reusable AI workflows. This reduces duplication and accelerates adoption without sacrificing safety.

4. Live Visibility. Observability must extend beyond logs. Enterprises need continuous visibility into cost consumption, model performance, output quality, and usage patterns. When visibility is built in, auditing and optimization become less reactive and more routine.

5. Smart Orchestration. As AI workflows become multi-step and multi-model, orchestration matters. A standardized orchestration layer ensures reliability across complex flows, traceability for audits, and safe coordination between models and systems.
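To show how the first, second, and fourth requirements fit together, here is a minimal sketch of a single entry point that runs policy-as-code guardrails before any model call and keeps a per-team usage count. Every name in it (`Gateway`, `Request`, the two guardrails, the approved model list) is hypothetical, and the card-number check is deliberately naive. It illustrates the pattern only and does not describe any specific platform.

```python
import re
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Request:
    team: str
    model: str
    prompt: str


# A guardrail returns a reason string if the request is blocked, else None.
Guardrail = Callable[[Request], Optional[str]]

APPROVED_MODELS = {"small-model", "large-model"}  # hypothetical catalog


def approved_model_only(req: Request) -> Optional[str]:
    if req.model not in APPROVED_MODELS:
        return f"model '{req.model}' is not in the approved catalog"
    return None


def no_card_numbers(req: Request) -> Optional[str]:
    # Naive check: blocks any standalone run of 16 digits.
    if re.search(r"\b\d{16}\b", req.prompt):
        return "prompt appears to contain a card number"
    return None


@dataclass
class Gateway:
    guardrails: list[Guardrail]
    calls_by_team: dict[str, int] = field(default_factory=lambda: defaultdict(int))
    blocked: list[tuple[str, str]] = field(default_factory=list)

    def handle(self, req: Request, call_model: Callable[[Request], str]) -> str:
        """Run every guardrail, record the outcome, then forward the request."""
        for check in self.guardrails:
            reason = check(req)
            if reason is not None:
                self.blocked.append((req.team, reason))
                raise PermissionError(reason)
        self.calls_by_team[req.team] += 1
        return call_model(req)


# Hypothetical usage with a stand-in for the real model call.
gateway = Gateway([approved_model_only, no_card_numbers])
reply = gateway.handle(
    Request("support", "small-model", "Summarize this ticket"),
    call_model=lambda r: f"[{r.model}] summary",
)
print(reply, dict(gateway.calls_by_team))
```

Because every request passes through `handle`, adding a new policy means adding one function to the list, and the usage counters come for free.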

Platform Alone Isn’t Enough

Here’s the part many enterprises underestimate.

The challenge with AI goes beyond technology. It requires real organizational change.

A successful shift from experimentation to enterprise capability requires architectural guidance, governance design, organizational alignment, and continuous optimization as models and regulations evolve.

That’s why the strongest approach combines platform capabilities with deep enablement, ensuring AI systems are not only deployed, but operated sustainably over time.

McKinsey’s 2025 AI survey found that organizations with “significant” financial returns are twice as likely to have redesigned end-to-end workflows. Before modeling techniques even come into play, successful companies invest in the foundation. They win not by adding tools, but by building stronger architecture.

Where Fyrii Fits

This is exactly the gap Fyrii was built to address.

We function as an enterprise AI control plane — a unified layer that sits between AI models and business systems, giving organizations centralized control without slowing innovation.

In other words: AI can think. Fyrii helps it act — safely, visibly, and at scale.

By:

- unifying entry points

- automating guardrails

- centralizing observability

- and orchestrating complex workflows

We transform fragmented AI usage into a powerful, enterprise-grade capability.

Not just another tool. A foundation.

The Path Forward

AI is no longer optional. And it’s no longer experimental.

For CIOs and CTOs, the question isn’t whether to adopt AI. That’s already settled. The question is whether current approaches create strategic advantage or operational liability.

That distinction comes down to three principles:

Build governance into the foundation. Retroactive controls don’t work at scale. Governance must be embedded, automated, and enforceable by design.

Treat AI as infrastructure. If AI supports core operations, it deserves the same architectural rigor as cloud, networks, and identity systems.

Enable innovation within boundaries. The goal isn’t restriction—it’s safe speed. When teams know the rules and the platform enforces them, innovation accelerates instead of stalling.

The Bottom Line

Enterprises, especially in regulated sectors like fintech, are at an inflection point.

Those who continue treating AI as a collection of experiments will accumulate risk, cost, and complexity. Those who treat it as infrastructure — investing in the right control plane — will build durable advantage.

The future of enterprise AI belongs to platforms that balance speed with control, innovation with responsibility, flexibility with trust.

The architectural decisions made today will determine who gets there first.

—

If you’re navigating the shift from AI experimentation to enterprise capability, let’s talk about what control looks like at scale.

Sources

- MIT Project NANDA — 95% of AI pilots fail to deliver P&L impact; 67% vendor success vs 33% internal builds. Fortune

- S&P Global — 42% abandoned AI initiatives in 2025 (up from 17% in 2024); 46% of POCs scrapped. WorkOS

- CloudZero — Average monthly AI spend $85,521 in 2025; 45% spending over $100K/month. USM Systems

- UpGuard — 80%+ workers use unapproved AI tools; 38% share sensitive data without approval. Cybersecurity Dive

- IBM — AI-associated breaches cost over $650,000 per incident. ISACA

- McKinsey — Only 18% have enterprise AI governance council; 2x returns for workflow-first organizations. NAVEX

- Harvard Ethics — 480+ enacted AI bills at state level; EU AI Act fines up to €35M or 7% revenue. Harvard

- Unified AI Hub — 50-90% cost reduction through optimization; 60% savings via model cascading. Unified AI Hub

- LangChain — 89% have observability for AI agents; 32% cite quality as top production barrier. LangChain